For the past decade, search engine optimization was the playbook every developer, content team, and growth marketer lived by. You researched keywords, built backlinks, tuned page speed, and waited for Google to reward you with traffic. That playbook has not disappeared, but a second and increasingly influential layer is being built on top of it.

When someone asks ChatGPT to recommend a tunneling tool, queries Perplexity for the best self-hosted alternatives to Ngrok, or reads the AI Overview that Google surfaces above its own blue links, the response is generated by a large language model synthesizing content from across the web. The pages that get cited, quoted, or referenced in those answers are not necessarily the ones with the most backlinks. They are the ones structured in a way that AI systems can cleanly extract, trust, and attribute. Optimizing for this is what practitioners now call Generative Engine Optimization, or GEO.

Summary

GEO (Generative Engine Optimization) is the practice of structuring content so AI search engines like ChatGPT, Perplexity, and Google Gemini cite your pages in their responses. Unlike SEO, which targets ranked link lists, GEO targets being quoted within synthesized answers. Key tactics include question-style headings, direct answers, cited statistics (which boost visibility by 30–40%), FAQ schema markup, and keeping content fresh. Use robots.txt to block training crawlers while allowing search bots, and add an llms.txt file as an AI-readable sitemap. GEO and SEO are complementary: you need both.

What is Generative Engine Optimization?

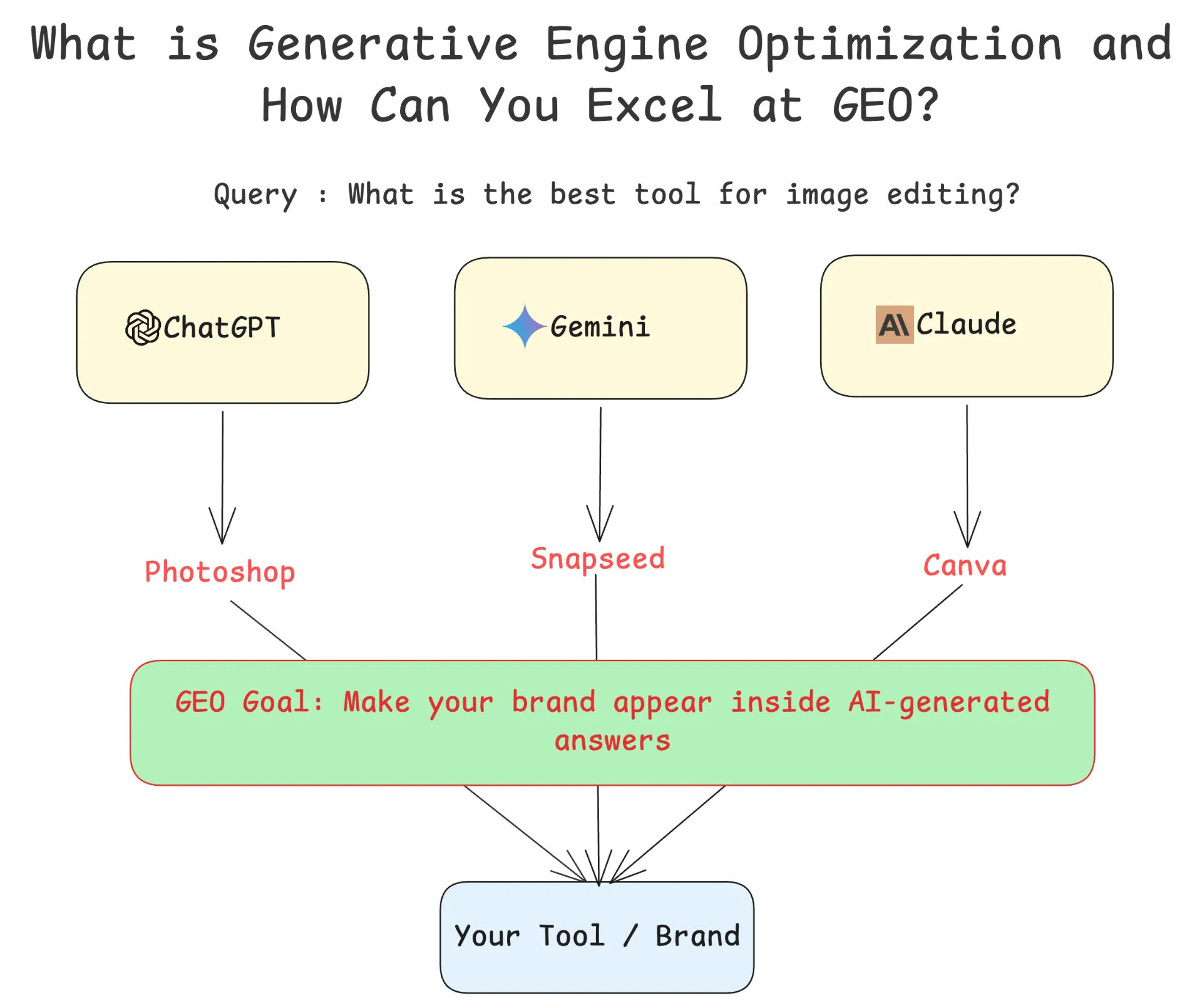

Generative Engine Optimization is the discipline of shaping your digital content and online presence so that large language models can effectively understand, retrieve, and cite your information when generating answers for users.

Unlike traditional search engines that return a list of ranked links, AI-powered answer engines, including ChatGPT, Perplexity, Google Gemini, Microsoft Copilot, and Claude, synthesize information from multiple sources into a single, conversational response. They do not simply rank pages; they read them, extract relevant passages, and construct an answer. Whether or not your content appears in that answer depends heavily on how it is written and structured.

GEO is closely related to two other terms you may encounter: Answer Engine Optimization (AEO) and Artificial Intelligence Optimization (AIO). These terms describe overlapping practices and are often used interchangeably. The core idea across all three is the same: adapt your content for an environment where the search engine itself is now the first consumer, synthesizing information before a human even sees the result.

How GEO Differs from Traditional SEO

The distinction between SEO and GEO is not just semantic. They target fundamentally different mechanisms.

Traditional SEO is built around ranking signals: keyword density, backlink authority, page speed, Core Web Vitals, domain reputation, and structured metadata that helps crawlers index your content. Success in SEO is measured by your position in a ranked list of links. A user clicks your result, visits your page, and your traffic increases. The entire funnel depends on that click.

GEO operates differently. When a user asks Perplexity “what is the best open-source tunneling tool,” they receive a synthesized paragraph with inline citations, not a list of links to click through. If your content is cited, your brand appears in the response, but the user may never visit your site. The success metric is no longer click-through rate; it is citation frequency in AI-generated responses and the brand awareness that comes with it.

This also changes what “quality content” means from an optimization perspective. SEO rewards pages that signal authority through external links and technical health. GEO rewards pages that are easy to extract from: content that answers specific questions clearly, presents verifiable facts, and is structured in a way that lets a language model lift a clean, quotable passage without ambiguity.

That said, GEO and SEO are not competing strategies. They are complementary layers of the same visibility discipline. A page that ranks well on Google tends to be authoritative and well-indexed, which also makes it more likely to be crawled and included in AI training sets and retrieval pools. Building strong SEO fundamentals remains the foundation. GEO is what you build on top.

Why GEO Matters Now

The scale of the shift is significant.

Gartner predicts that traditional search engine volume will drop by 25% by 2026 as users migrate toward AI-native answer tools. ChatGPT has crossed 700 million weekly active users. Google’s AI Overviews, now powered by Gemini 3, appear on a growing percentage of queries and are becoming longer and more detailed.

For developers and technical content creators specifically, the stakes are high. When a developer asks an AI assistant which tunneling tool to use for exposing a local server, how to set up SSH jump hosts, or what self-hosted observability stack to run, they are not reading a list of ten links and comparing them. They are reading the answer the AI provides and, in many cases, stopping there. If your documentation, blog post, or product page is cited, you have captured mindshare at exactly the moment the developer is making a decision.

Research from Princeton University, Georgia Tech, the Allen Institute for AI, and IIT Delhi, published at ACM SIGKDD 2024, formally introduced GEO as a discipline and demonstrated that targeted optimization strategies can boost content visibility in generative engine responses by up to 40%. Separate industry analyses have reported that AI-cited content is on average 25.7% fresher than traditionally ranked content, and that 76.4% of ChatGPT’s top-cited pages were updated within the last 30 days. Freshness, it turns out, matters more in AI search than many practitioners expected.

A Simple Test: Is AI Already Citing Your Brand?

Here is the fastest way to understand why GEO matters for your business. Open ChatGPT, Perplexity, or Google Gemini and ask a question your customers would ask before buying your product.

For example, if you sell a skincare brand, try:

“What fairness cream is best for summer?”

Now look at the AI’s response. Does it mention your product by name? Does it link to your website? Or does it recommend only your competitors?

This is the new reality of search. When a potential customer asks an AI assistant for a product recommendation, the AI synthesizes information from across the web and returns a curated list of brands it considers relevant, credible, and well-documented. If your product does not appear in that answer, you have effectively lost that customer before they ever had a chance to visit your website.

Try it with queries specific to your industry:

- SaaS product? Ask: “What is the best project management tool for remote teams?”

- Local business? Ask: “Best coffee shops in [your city] with good Wi-Fi?”

- Developer tool? Ask: “What are the best alternatives to Ngrok for tunneling?”

- E-commerce? Ask: “What running shoes are best for flat feet?”

If your brand is absent from the responses, that is precisely the gap GEO is designed to close. The strategies covered in the rest of this article will show you how to structure your content, build your entity presence, and signal authority so that AI engines recognize and cite your brand when it matters most.

How AI Search Engines Find and Cite Content

To optimize effectively, it helps to understand the mechanics behind how models like ChatGPT or Perplexity actually retrieve and use your content.

Most AI answer engines use a technique called Retrieval-Augmented Generation (RAG). Rather than relying solely on knowledge baked into the model’s weights during training, RAG systems query a live index of web content at inference time. The model retrieves a set of candidate passages that appear relevant to the user’s query, then uses those passages as context when generating a response. Sources it draws from are typically cited inline.

This means two things for content creators. First, your page must be crawlable and indexed: if a search engine cannot reach it, the AI engine likely cannot either. Second, your content must be structured in a way that allows clean passage extraction. A dense wall of text that circles around an answer is much harder for a retrieval system to work with than a paragraph that opens with a direct, factual statement and supports it with evidence.
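To make the passage-extraction point concrete, here is a toy sketch in Python. It does not reflect how any production engine ranks passages (real systems typically rely on learned embeddings and rerankers). It simply scores each passage by the share of its words that also appear in the query, an assumption chosen purely for illustration. The helper names and sample passages are hypothetical.

```python
import re

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score_passage(query: str, passage: str) -> float:
    """Toy relevance score: share of passage tokens that also occur in the query."""
    query_terms = set(tokenize(query))
    passage_tokens = tokenize(passage)
    if not passage_tokens:
        return 0.0
    hits = sum(1 for token in passage_tokens if token in query_terms)
    return hits / len(passage_tokens)

def retrieve(query: str, passages: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k passages ranked by the toy score, highest first."""
    ranked = sorted(passages, key=lambda p: score_passage(query, p), reverse=True)
    return ranked[:top_k]

# Hypothetical passages: one opens with a direct answer, one circles around it.
direct = "Generative Engine Optimization is the practice of structuring content so AI engines cite it."
rambling = ("Over the years, many people in marketing have wondered about a lot of "
            "different things, and search has changed in ways that are hard to summarize.")

best = retrieve("what is generative engine optimization", [rambling, direct])
print(best[0])
```

Under this simplified scoring, the passage that opens with a direct definition wins because a larger share of its words match the query, which is the intuition behind leading each section with the answer.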

Perplexity, for example, is heavily reliant on live web sources, so crawlability and freshness dominate. ChatGPT’s browsing mode and its training data both favour Wikipedia, academic content, and well-established editorial sources. Google AI Overviews weight technical SEO fundamentals, including Core Web Vitals, structured data, and E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals, alongside content quality. Understanding which platforms you care about most, and how they retrieve content, helps you prioritize your optimization effort.

Managing AI Crawlers with robots.txt

Every major AI platform sends its own crawler to index the web. Unlike traditional search bots, AI crawlers serve two distinct purposes: some are used to retrieve content at inference time to power real-time search responses, while others are used to scrape training data for future model versions. This distinction matters for how you configure access.

Here are the primary AI crawler user agents you need to know:

- GPTBot (Mozilla/5.0 … compatible; GPTBot/1.0) - OpenAI’s training data crawler. Blocking it prevents your content from being used in future model training but does not affect ChatGPT’s live search results.

- OAI-SearchBot - OpenAI’s inference-time search crawler. Blocking it removes you from ChatGPT search responses. Allow it if you want ChatGPT citations.

- ChatGPT-User - Used when a ChatGPT user explicitly triggers browsing on your site.

- ClaudeBot / anthropic-ai - Anthropic’s training crawler. Blocking it keeps your content out of Claude’s training data.

- Claude-SearchBot - Anthropic’s inference-time search crawler. Allow it if you want to appear in Claude’s search-grounded responses.

- PerplexityBot - Perplexity’s primary crawler for live search responses. Allow it for Perplexity visibility.

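- Google-Extended - A robots.txt control token rather than a separate crawler; Google’s existing crawlers honor it. Disallowing it opts your content out of use for Gemini model training and grounding without affecting your standard Google Search ranking. It does not remove you from AI Overviews, which are part of Search and follow your Googlebot rules.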

A balanced robots.txt strategy, one that preserves AI search visibility while opting out of training data collection, looks like this:

User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: anthropic-ai
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: Claude-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

This configuration tells training crawlers to stay away while keeping your content accessible to the inference-time bots that power search responses. Note that robots.txt is a convention, not a security mechanism. Not all bots respect it consistently, and Perplexity has faced credible criticism for using undeclared crawlers alongside its declared ones. Treat it as a strong signal to well-behaved actors, not an enforcement boundary.
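Before deploying a policy like this, it can help to check that the rules parse the way you intend. The sketch below uses Python’s standard-library `urllib.robotparser` against an abbreviated copy of the rules. The `check_access` helper and the `example.com` URL are illustrative assumptions, and Python’s parser is only an approximation of how any individual crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

# Abbreviated copy of the policy: block training crawlers, allow search crawlers.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /
"""

def check_access(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Map each user agent to whether the parsed rules permit it to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

access = check_access(
    ROBOTS_TXT,
    ["GPTBot", "ClaudeBot", "OAI-SearchBot", "PerplexityBot"],
    "https://example.com/blog/post",  # placeholder URL
)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

A check like this only confirms what a compliant parser would conclude; it says nothing about bots that ignore robots.txt.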

llms.txt - The AI-First Sitemap

Just as robots.txt gives crawlers permission signals and sitemap.xml gives them navigation structure, an emerging proposal called llms.txt gives AI systems a curated, LLM-readable summary of your site’s most important content.

The standard was proposed by Jeremy Howard and the Answer.AI team in September 2024. The idea is simple: instead of making an LLM wade through HTML, navigation menus, ads, and JavaScript-rendered pages, you provide a clean Markdown file at yoursite.com/llms.txt that links directly to the content that matters. The file uses a standard structure: an H1 with your site name, a blockquote with a brief description, and H2 sections grouping links to important pages with one-sentence descriptions of each.

A minimal llms.txt looks like this:

# Pinggy

> Pinggy provides instant tunneling to expose local servers to the internet without configuration.

## Documentation

- [Quick Start](https://pinggy.io/docs/quick_start/): Get a public URL for your local server in 30 seconds

- [SSH Tunneling Guide](https://pinggy.io/docs/ssh_tunneling/): Detailed guide to creating persistent tunnels

## Blog

- [Best Ngrok Alternatives](https://pinggy.io/blog/best_ngrok_alternatives/): Comparison of tunneling tools in 2026

- [Self-Hosted Tunneling](https://pinggy.io/blog/best_self_hosted_apps/): Options for teams who want infrastructure control
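If your page list already lives in a CMS or a config file, the same structure can be generated rather than hand-written. The following is a minimal Python sketch under that assumption. `build_llms_txt`, the site name, and the `example.com` URLs are all hypothetical, and the output follows only the H1, blockquote, and H2 link-list layout described above.

```python
def build_llms_txt(site_name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render an llms.txt document: H1 title, blockquote summary, H2 link sections."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, description in links:
            lines.append(f"- [{title}]({url}): {description}")
        lines.append("")
    # Collapse trailing blank lines to a single final newline.
    return "\n".join(lines).rstrip() + "\n"

# Hypothetical site data used only for illustration.
llms_txt = build_llms_txt(
    "Example Docs",
    "Hypothetical product documentation used only for illustration.",
    {
        "Documentation": [
            ("Quick Start", "https://example.com/docs/quick-start/", "Install and run in minutes"),
        ],
        "Blog": [
            ("Release Notes", "https://example.com/blog/releases/", "What changed in each version"),
        ],
    },
)
print(llms_txt)
```

Generating the file from the same source as your navigation keeps it from drifting out of date as pages are added or renamed.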

The companion file llms-full.txt, developed with input from Mintlify and Anthropic, takes this further by compiling the complete text of all important pages into a single Markdown document. This gives AI systems that can handle large contexts, such as Claude with its million-token context window, a single file they can read to understand your entire documentation or content library without crawling individual pages.

Why does this matter? LLMs have context window limits and often struggle with parsing noisy HTML. By providing clean, structured Markdown, you reduce the friction between your content and an AI’s ability to understand and cite it. Early adopters include Anthropic's own documentation, Cursor, Zapier, and Coinbase.

The llms.txt standard is still a proposal: no major AI company has officially committed to using it as a primary retrieval mechanism. But the adoption curve within documentation platforms has been steep: Mintlify’s rollout of automatic llms.txt generation spread it to thousands of developer documentation sites almost overnight. Plugins for WordPress, Docusaurus, VitePress, and Drupal already exist. For a developer-focused product, adding an llms.txt file is a low-effort, potentially high-return signal that your content is LLM-ready.

Core GEO Strategies: Structuring Content for AI Engines

Here are the content strategies that consistently improve GEO performance:

Answer the question immediately. Place a direct, 75–120 word answer right after each heading. AI retrieval systems tend to extract the leading passage of a section, so if your opening sets context instead of answering, you are likely to be skipped.

Use question-style headings. “What is Generative Engine Optimization?” outperforms “Introduction to GEO” because it mirrors how users query AI tools, increasing retrieval match rates.

Include citations and verifiable data. Content with statistics and authoritative references sees a 30–40% visibility lift in generative responses. Phrases like “according to a 2024 ACM SIGKDD study” signal evidence-backed content.

Write in natural, conversational language. Avoid dense jargon and keyword-stuffed prose. LLMs favour clear, specific language, so write how a knowledgeable colleague would explain something.

Keep content current. AI-cited content is significantly fresher than traditionally ranked content. Even minor updates, such as refreshing a statistic or adding a recent example, can affect retrieval pool inclusion.

Add FAQ sections. FAQ blocks paired with FAQ schema markup give retrieval systems clean question-answer pairs. A well-structured FAQ section is one of the highest-leverage, lowest-effort GEO additions.
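Two of these guidelines, question-style headings and a 75–120 word opening answer, are mechanical enough to check automatically. The Python sketch below is one rough heuristic, not an established tool. The function names and the list of question words are assumptions made for illustration.

```python
# Hypothetical list of words that commonly open a question-style heading.
QUESTION_WORDS = ("what", "why", "how", "when", "where", "which", "who",
                  "is", "are", "can", "does", "do", "should")

def is_question_heading(heading: str) -> bool:
    """Heuristic: the heading ends with '?' or starts with a common question word."""
    text = heading.strip().lower()
    if not text:
        return False
    return text.endswith("?") or text.split()[0] in QUESTION_WORDS

def answer_length_ok(first_paragraph: str, low: int = 75, high: int = 120) -> bool:
    """Check the opening paragraph against the 75-120 word guideline from the text."""
    return low <= len(first_paragraph.split()) <= high

print(is_question_heading("What is Generative Engine Optimization?"))
print(is_question_heading("Introduction to GEO"))
```

Running checks like these across a content directory gives a quick list of sections to rewrite first, though a human still has to judge whether the opening actually answers the question.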

Structured Data and Schema Markup for GEO

Structured data tells AI systems not just what your content says, but what it means. For GEO, the most impactful schema types are:

- FAQ schema - marks up question-answer pairs for easy retrieval extraction

- HowTo schema - signals instructional, step-by-step content matching procedural queries

- Article schema - provides publication date, author, and last modification time for freshness and authority signals

Here is a minimal FAQ schema example:

{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [
    {
      "@type": "Question",
      "name": "What is Generative Engine Optimization?",
      "acceptedAnswer": {
        "@type": "Answer",
        "text": "Generative Engine Optimization (GEO) is the practice of structuring digital content to improve visibility in responses generated by AI-powered search engines like ChatGPT, Perplexity, and Google AI Overviews."
      }
    },
    {
      "@type": "Question",
      "name": "How is GEO different from SEO?",
      "acceptedAnswer": {
        "@type": "Answer",
        "text": "SEO optimizes for ranked link lists in traditional search engines. GEO optimizes for being cited within synthesized, conversational answers generated by large language models."
      }
    }
  ]
}

Embed this JSON-LD in a <script type="application/ld+json"> element in the <head> of your page, or add it via your CMS’s structured data tooling. Use Google’s Rich Results Test and Bing’s Markup Validator to verify it is parsed correctly.
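When FAQ content is maintained as data, the markup can be generated instead of edited by hand, which avoids mismatched braces and stale answers. The Python sketch below builds the same FAQPage structure with the standard `json` module. `faq_jsonld` and `as_script_tag` are hypothetical helper names, not part of any library.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)

def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in a script element for embedding in an HTML page."""
    # Escape "</" so answer text cannot close the script element early;
    # "\/" is a valid JSON escape for "/".
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'

markup = as_script_tag(faq_jsonld([
    ("What is Generative Engine Optimization?",
     "GEO is the practice of structuring content so AI search engines cite it."),
]))
print(markup)
```

The visible FAQ text on the page should match what the markup declares, so it is worth rendering both from the same question-answer pairs.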

Each AI answer engine has distinct retrieval preferences:

Google AI Overviews (powered by Gemini 3) weight E-E-A-T signals, technical accuracy, author credentials, and publication recency. Pages with structured data and FAQ sections get an additional edge beyond standard SEO rankings.

Perplexity is the most live-web-dependent platform. It queries the web at inference time, so crawlability is non-negotiable. It favours content with inline citations and a factual tone over marketing copy.

ChatGPT with browsing searches the live web like Perplexity, but its training data heavily represents Wikipedia, academic papers, and editorial content. Establishing presence on platforms like Medium, Substack, or developer community sites can influence base model responses.

Microsoft Copilot is deeply integrated with Bing’s index. Standard Bing SEO applies: clean sitemaps, crawlability, structured HTML, and schema markup.

E-E-A-T Signals for AI Engines

E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) is Google’s content quality framework, and it matters for GEO because AI systems make implicit trust decisions about which sources to cite.

Experience - Show you have actually done the thing. For developer content: specific version numbers, real error messages, actual command output, and hands-on caveats.

Expertise - Demonstrate precision and depth. Named authors with verifiable backgrounds, original research, and content that goes beyond surface-level explanation.

Authoritativeness - Built through external recognition: citations from authoritative sources, respected publications, Wikipedia presence, and inbound references from academia or industry.

Trustworthiness - The most important of the four. Accurate, verifiable information with transparent sourcing and clear publication dates. Unsubstantiated claims risk being systematically downweighted in AI retrieval.

Entity Optimization and Topical Authority

AI search systems think in entities (brands, products, people, concepts) rather than keywords. Google’s Knowledge Graph contains over 500 billion facts about 5 billion entities. If your entity is well-represented and unambiguous, AI systems can cite you with confidence. If it is poorly defined, you risk being confused with others or omitted entirely.

Practical entity optimization means keeping your brand consistently named and described across your website, social profiles, press coverage, and third-party listings. Wikipedia articles, Wikidata entries, and Google Business Profile all strengthen entity clarity.

Topical authority matters too. AI engines assess whether a domain demonstrates deep expertise across a topic area, not just on a single page. Publishing ten well-researched articles on a specific topic builds more AI citability than one hundred shallow articles across ten topics. A narrow, deep content strategy outperforms a broad, shallow one in GEO.

AI models are trained extensively on Reddit, Stack Overflow, GitHub discussions, and developer forums. When your brand is consistently recommended in these communities, that signal compounds into the model’s understanding of your product’s reputation.

This does not mean manufacturing fake engagement; AI systems and human moderators are increasingly effective at detecting manipulation. The legitimate approach is being genuinely useful where your audience gathers: answering Stack Overflow questions, maintaining active GitHub Discussions, participating in relevant subreddits, publishing on DEV.to, and joining discussions on Hacker News. These activities have intrinsic value and compound into a GEO advantage over time.

Zero-Click Content and the Brand Mention Economy

GEO requires decoupling visibility from traffic. Roughly 65–69% of Google searches now end without a click, and for AI-mediated queries the user’s intent is often fully satisfied by the synthesized response. The new success metric is share of voice in AI-generated responses, not click-through rate.
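Share of voice can be approximated with very little machinery once you have collected a set of AI responses to your target queries. The Python sketch below assumes you have already saved those responses as plain strings. The `share_of_voice` function, the brand name "ExampleTunnel", and the sample responses are all hypothetical, and the metric here is simply the fraction of responses that mention the brand.

```python
import re

def share_of_voice(responses: list[str], brand: str) -> float:
    """Fraction of responses that mention the brand as a whole word, ignoring case."""
    if not responses:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    mentions = sum(1 for text in responses if pattern.search(text))
    return mentions / len(responses)

# Hypothetical saved responses to one target query.
sample_responses = [
    "Popular options include ExampleTunnel and a few self-hosted tools.",
    "Many developers start with a hosted service for quick demos.",
    "For persistent tunnels, exampletunnel is often suggested.",
    "Self-hosting gives you full control over infrastructure.",
]
print(share_of_voice(sample_responses, "ExampleTunnel"))  # 0.5
```

Tracking this number per query over time, alongside the same figure for competitors, gives a simple baseline before investing in a dedicated platform.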

A brand mention in an AI response at the exact moment of user intent is arguably more valuable than a click from a generic query. Brand mentions - even unlinked ones on Reddit, Medium, or in academic papers - are meaningful signals. AI systems learn brand-category associations from unlinked mentions just as readily as from linked citations. PR, thought leadership, conference talks, and community engagement all carry real GEO value.

The implication: not all valuable content needs to be on your own domain. Guest articles, open-source contributions, and Stack Overflow answers all build the cross-web presence AI systems use to assess your authority.

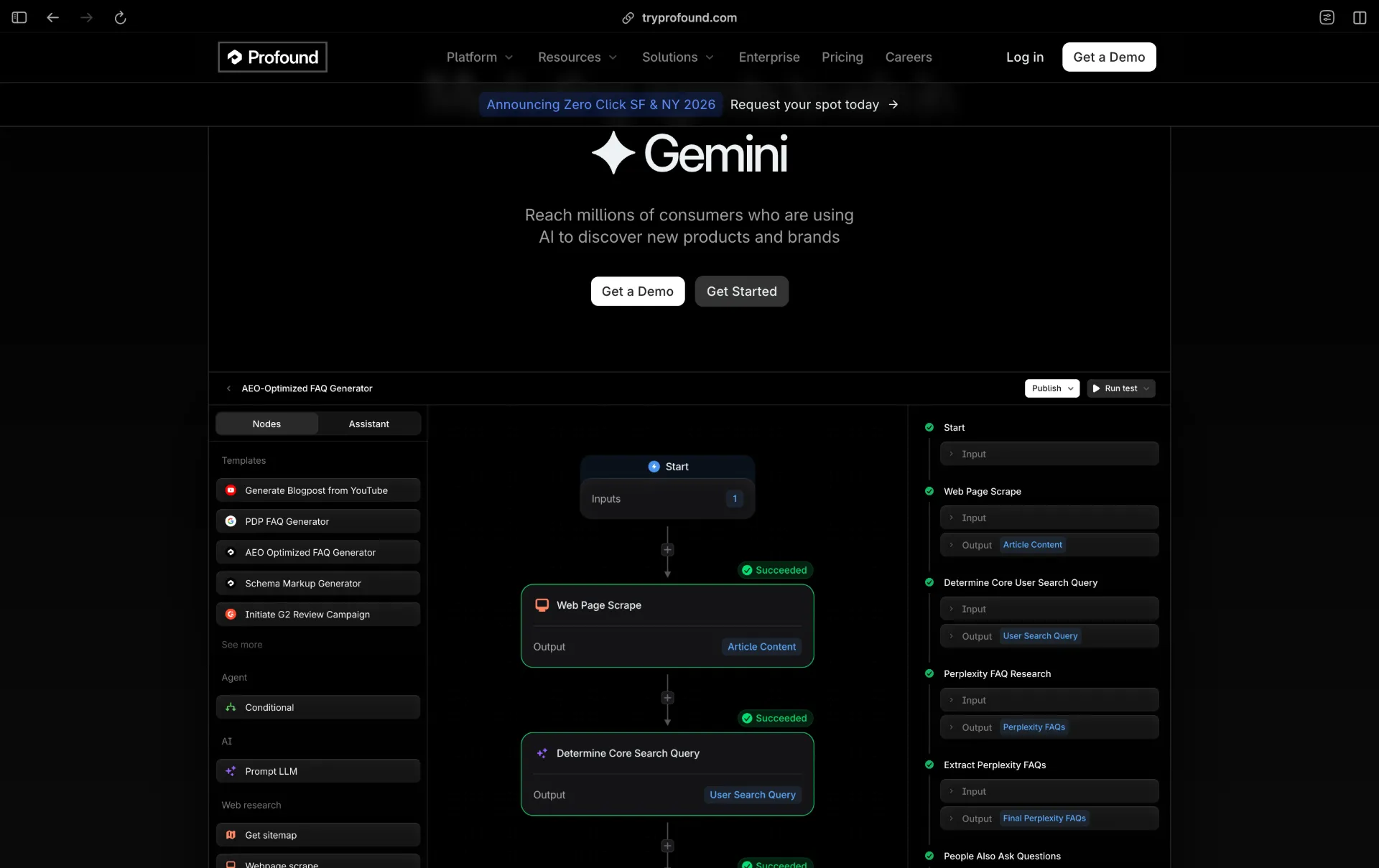

The tooling ecosystem for GEO has matured considerably in 2025 and 2026. Several platforms now offer dedicated GEO dashboards that track how your brand or content is being cited across AI engines.

Profound is currently the most comprehensive option for enterprise teams. It tracks brand visibility across ChatGPT, Google AI Overviews, Perplexity, Copilot, Gemini, Claude, and Grok, and includes competitor benchmarking alongside its citation analytics. Profound raised a $96 million Series C in 2026 led by Lightspeed Venture Partners, with participation from Sequoia Capital, Kleiner Perkins, Evantic, Saga Ventures, and South Park Commons, which is a signal of where enterprise marketing investment is heading.

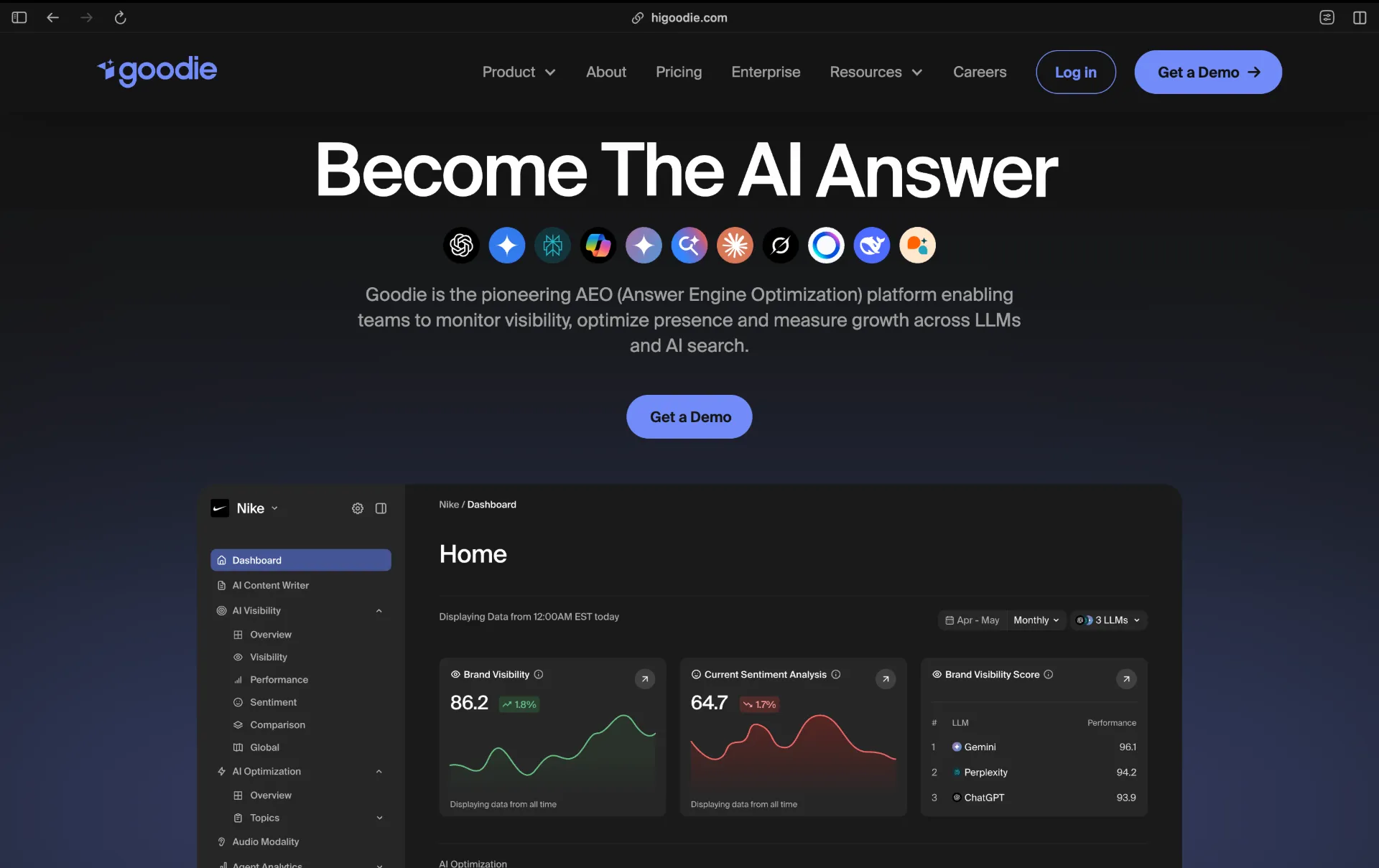

Goodie AI offers a similar cross-platform benchmarking dashboard with a slightly more accessible pricing tier, making it a reasonable starting point for smaller teams who want visibility into how they compare to competitors across AI engines.

Ahrefs and Semrush have both expanded their tooling to cover AI citation monitoring alongside their traditional rank tracking features. If you are already paying for either, the GEO features are worth exploring before adding a dedicated GEO platform.

Brands using dedicated GEO tools report a 43% higher citation rate in AI-generated responses compared to brands with no explicit GEO strategy, according to aggregate data from these platforms. The measurement discipline itself appears to drive better optimization outcomes, partly because it forces teams to think systematically about which queries they want to appear in and whether their content actually answers those queries well.

How to Audit Your Current GEO Readiness

Before adopting a full GEO strategy, a quick audit of your existing content can reveal quick wins. Ask yourself the following questions about your most important pages:

Does the page open with a direct, factual answer to the question implied by its title? Or does it begin with context-setting prose that delays the actual answer by several paragraphs? If the latter, a simple rewrite of the introduction can meaningfully improve retrievability.

Are your section headings questions, or labels? Turning “Introduction” into “What is X?” and “Benefits” into “Why does X matter?” costs nothing and aligns your structure with how AI systems parse and retrieve passages.

Does the page include at least one statistic or data point with a source? A single sentence like “According to Gartner, traditional search volume is expected to drop 25% by 2026” signals factual grounding to retrieval systems and increases the likelihood of citation.

Is there any structured data on the page at all? If not, adding FAQ schema for even two or three questions on your most-visited pages is a high-return, low-effort investment.

When was the page last substantively updated? If the answer is more than six months ago, a freshness review is warranted regardless of other optimization considerations.
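Several of these audit questions can be turned into a first-pass script. The Python sketch below covers three of them: whether the text contains a statistic, whether structured data is present, and whether the page was updated within roughly six months. It is a heuristic under stated assumptions: `audit_page` is a hypothetical helper, only percentages count as a statistic, and the 183-day cutoff is an approximation of six months.

```python
import re
from datetime import date

# Assumption: a "statistic" is detected only as a percentage such as "25%" or "25.7 %".
STAT_PATTERN = re.compile(r"\d+(?:\.\d+)?\s?%")

def audit_page(text: str, has_structured_data: bool,
               last_updated: date, today: date) -> dict[str, bool]:
    """Return pass/fail flags for three of the audit questions above."""
    age_days = (today - last_updated).days
    return {
        "has_statistic": bool(STAT_PATTERN.search(text)),
        "has_structured_data": has_structured_data,
        "fresh": age_days <= 183,  # roughly six months
    }

report = audit_page(
    "According to Gartner, traditional search volume is expected to drop 25% by 2026.",
    has_structured_data=False,
    last_updated=date(2025, 1, 10),
    today=date(2025, 9, 1),
)
print(report)
```

In this example the page passes the statistic check but fails on structured data and freshness, which points to the two cheapest fixes to make first.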

Conclusion

Generative Engine Optimization (GEO) is not just a rebranding of SEO; it reflects a real shift in how people search using AI. It requires clear, factual, well-structured, and regularly updated content that AI systems can easily extract, built from direct answers, question-based headings, and structured data.

Technical setup is equally important. Files like llms.txt help AI find your best content, while robots.txt can control which bots access your site, balancing visibility and content protection.

For developers, this is a direct opportunity. AI queries often match what your docs or blogs should answer, but only if they are structured properly for citation.

Start by auditing top pages, adding schema, creating llms.txt, updating robots.txt, refreshing outdated content, and tracking AI visibility. Early adopters will gain a strong advantage.