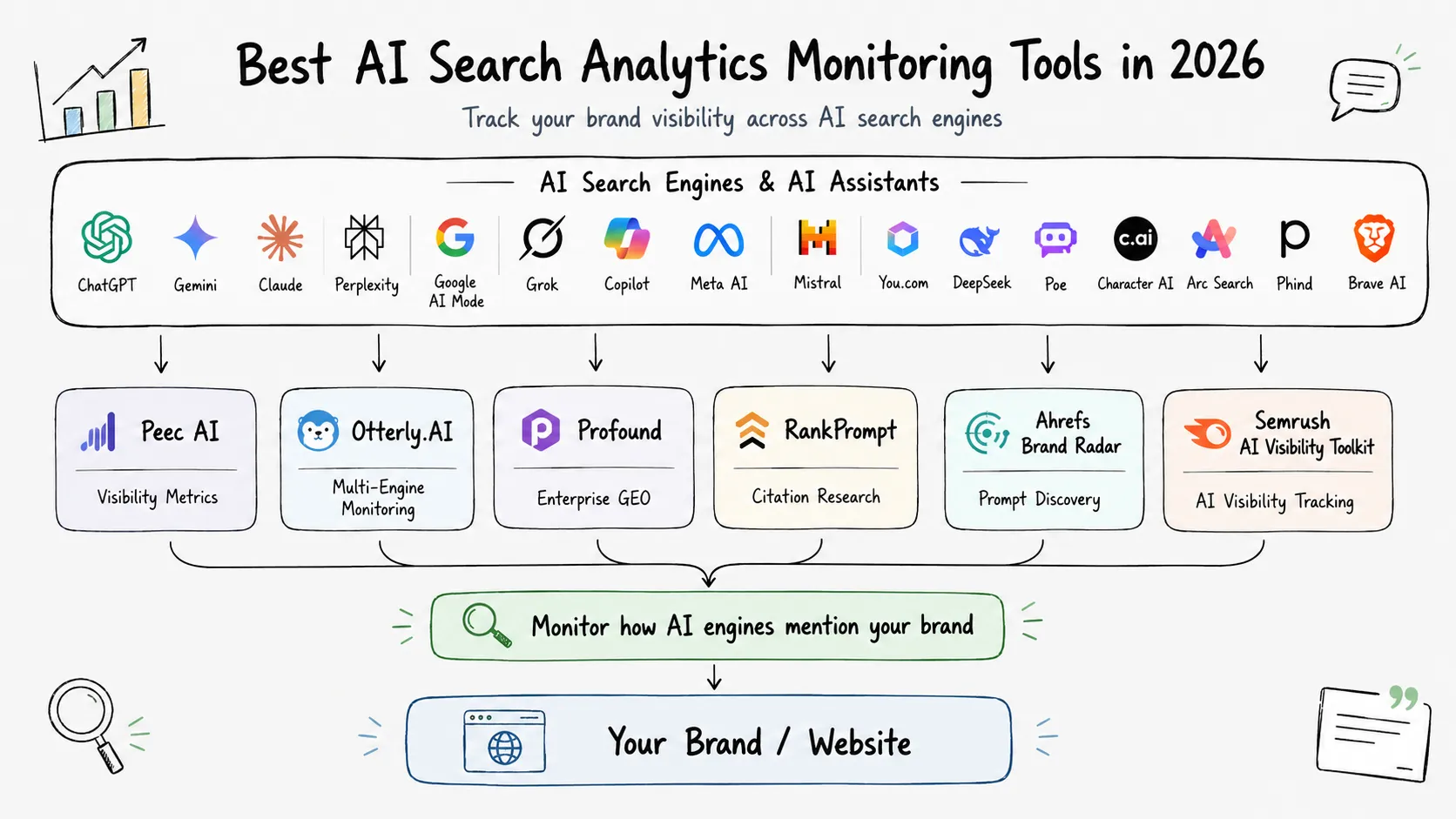

AI search visibility is now a separate analytics problem from classic SEO. A page can rank on Google and still be absent from ChatGPT, Gemini, Perplexity, or AI Overviews. That is why teams now track two layers at once: search rankings and AI answer visibility.

If you are evaluating tools in this category, the most important shift is to focus less on vanity dashboards and more on repeatable monitoring loops: prompt coverage, mention rate, citation quality, sentiment, and competitor share of voice over time. This guide covers the most practical tools for that workflow, including Otterly, Peec, Profound, and RankPrompt.

Summary

- If your team wants a focused GEO workflow with clear visibility metrics (visibility, position, sentiment), start with Peec AI.

- If you want broad multi-engine monitoring plus content audit and crawlability checks, consider Otterly.AI.

- If you run enterprise-scale AEO programs with dedicated content and brand teams, Profound is a strong fit.

- If you want an all-in-one stack that combines AI monitoring, citation research, and outreach in one place, evaluate RankPrompt.

- For search-backed prompt-scale research and competitive discovery, add Ahrefs Brand Radar to your stack.

- If you already run Semrush, the AI Visibility Toolkit is a practical extension for AI-layer tracking.

- If your priority is enterprise answer share and citation intelligence with AI-agent traffic context, check Scrunch.

| Tool | Best For | What Stands Out | Watch Out For |
|---|---|---|---|
| Peec AI | Marketing and SEO teams that need clean AI visibility reporting | Visibility, position, sentiment, source-level analytics, daily refresh cadence | Teams still need an execution process outside the dashboard |
| Otterly.AI | GEO-focused teams that want monitoring plus optimization workflow | Prompt research, citation tracking, sentiment, crawlability and content audit features | Rich feature set can feel broad for small teams early on |
| Profound | Enterprise AEO and cross-functional marketing programs | Answer Engine Insights, Prompt Volumes, Agent Analytics, and agent workflows | Best value appears when teams can act on data at scale |
| RankPrompt | Teams that want monitoring + content + outreach in one workflow | Coverage across major AI engines with integrated tools and location/language support | Validate feature depth against your exact workflow before standardizing |
| Ahrefs Brand Radar | Large-scale competitive research and discovery | Massive search-backed prompt index and fast zero-setup exploration | You still need a downstream optimization playbook |
| Semrush AI Visibility Toolkit | Teams already invested in Semrush | AI visibility monitoring integrated with broader SEO and reporting stack | Need to evaluate limits and add-ons against your prompt volume |
| Scrunch | Enterprise teams focused on answer share and citation strategy | Answer-share framing, prompt and citation monitoring, AI-agent traffic context | More useful when content and web teams can execute quickly |

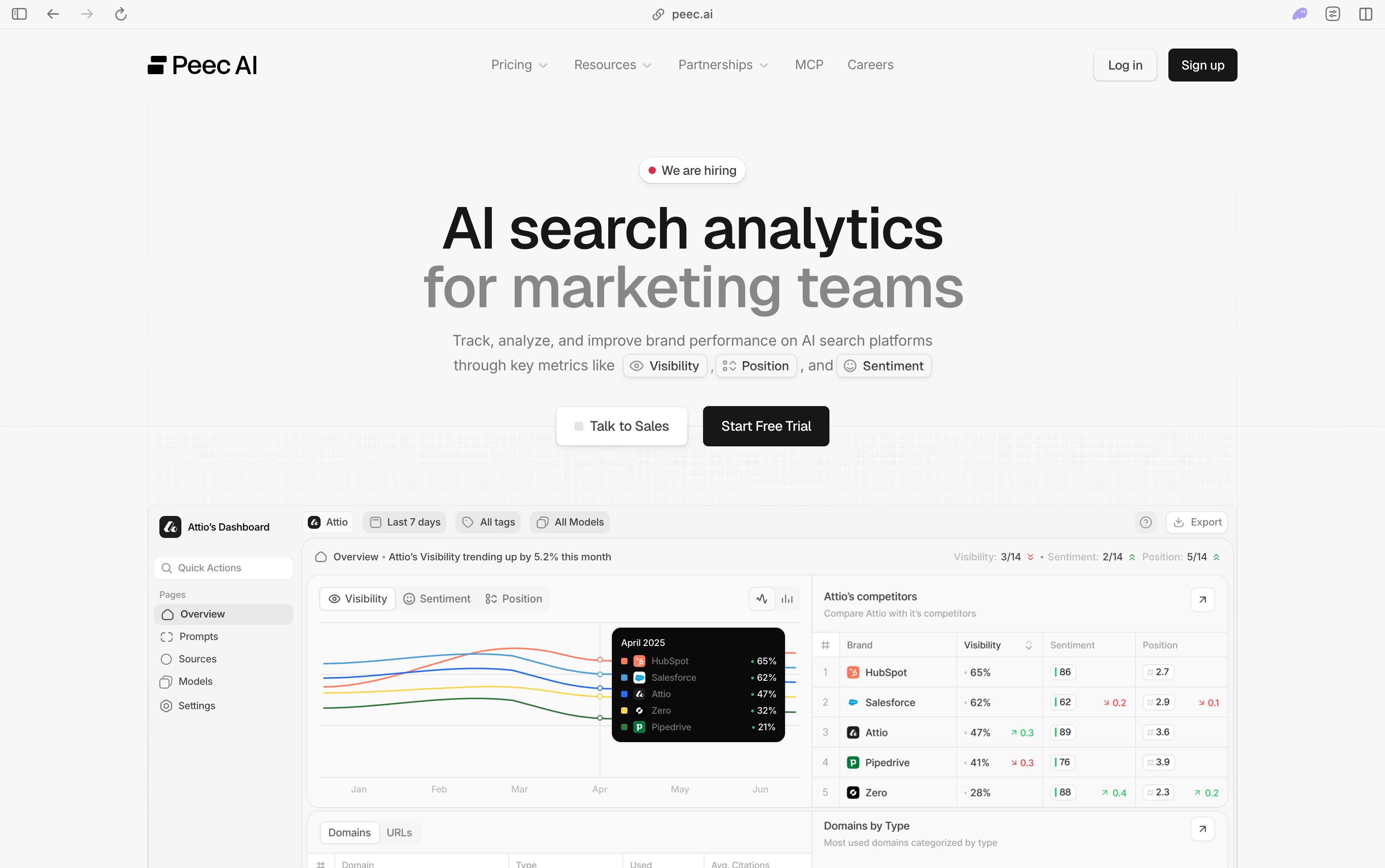

1. Peec AI

Peec AI is one of the cleaner tools for teams that want to monitor AI visibility without adding unnecessary complexity. Its core model is straightforward: track brand visibility, position, and sentiment across AI search platforms, then connect those metrics to source-level evidence so strategy is based on what models actually cite.

A practical advantage is its split between brand visibility and source visibility. This is important because many teams discover they are cited as a source but not mentioned as a brand, or mentioned as a brand but not cited. Peec also documents daily execution cadence and Looker Studio integration, which helps teams that already run weekly reporting workflows.
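To make the brand-versus-source distinction concrete, here is a minimal sketch of how a team might label captured answers in its own analysis. It is not Peec's data model or API: the `Answer` record, the `classify` function, and the example brand and domains are all hypothetical, and brand detection is a naive substring match.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    """One AI answer captured for a tracked prompt (hypothetical record shape)."""
    prompt: str
    text: str
    cited_domains: list[str] = field(default_factory=list)


def classify(answer: Answer, brand: str, domain: str) -> str:
    """Label an answer by whether the brand is named, cited, both, or neither."""
    # Naive substring match; a real pipeline would need entity matching.
    mentioned = brand.lower() in answer.text.lower()
    cited = domain.lower() in (d.lower() for d in answer.cited_domains)
    if mentioned and cited:
        return "mentioned_and_cited"
    if mentioned:
        return "mentioned_only"
    if cited:
        return "cited_only"
    return "absent"


# Hypothetical sample data for illustration only.
answers = [
    Answer("best crm for startups", "Acme CRM is a popular choice.", ["review-site.example"]),
    Answer("crm pricing comparison", "Several vendors offer free tiers.", ["acme.example"]),
]
labels = [classify(a, brand="Acme", domain="acme.example") for a in answers]
```

Counting the `mentioned_only` and `cited_only` labels separately shows which of the two gaps is larger for a given prompt set.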

During evaluation, pay attention to segmentation quality. You should be able to group prompts by funnel stage, product line, and region, then compare movement week over week without rebuilding reports each time. If the workflow to create those slices is smooth, Peec can become a reliable weekly decision layer for both SEO and content teams.
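The kind of slicing described above can be sketched outside any tool, which is a useful way to check an export during a trial. The row layout, week labels, and function names below are assumptions for illustration, not a vendor export format.

```python
from collections import defaultdict

# Hypothetical weekly results: (week, funnel_stage, region, brand_mentioned)
rows = [
    ("W1", "comparative", "US", True),
    ("W1", "comparative", "US", False),
    ("W2", "comparative", "US", True),
    ("W2", "comparative", "US", True),
    ("W1", "educational", "DE", False),
    ("W2", "educational", "DE", True),
]


def mention_rate_by_segment(rows):
    """Return {(funnel_stage, region): {week: mention_rate}}."""
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))  # [mentions, total]
    for week, stage, region, mentioned in rows:
        cell = counts[(stage, region)][week]
        cell[0] += int(mentioned)
        cell[1] += 1
    return {
        seg: {week: m / t for week, (m, t) in weeks.items()}
        for seg, weeks in counts.items()
    }


def week_over_week(rates, prev_week, curr_week):
    """Change in mention rate per segment, skipping segments missing either week."""
    return {
        seg: weeks[curr_week] - weeks[prev_week]
        for seg, weeks in rates.items()
        if prev_week in weeks and curr_week in weeks
    }


rates = mention_rate_by_segment(rows)
deltas = week_over_week(rates, "W1", "W2")
```

If producing the same comparison inside the tool takes noticeably more effort than this, that is a signal about segmentation quality.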

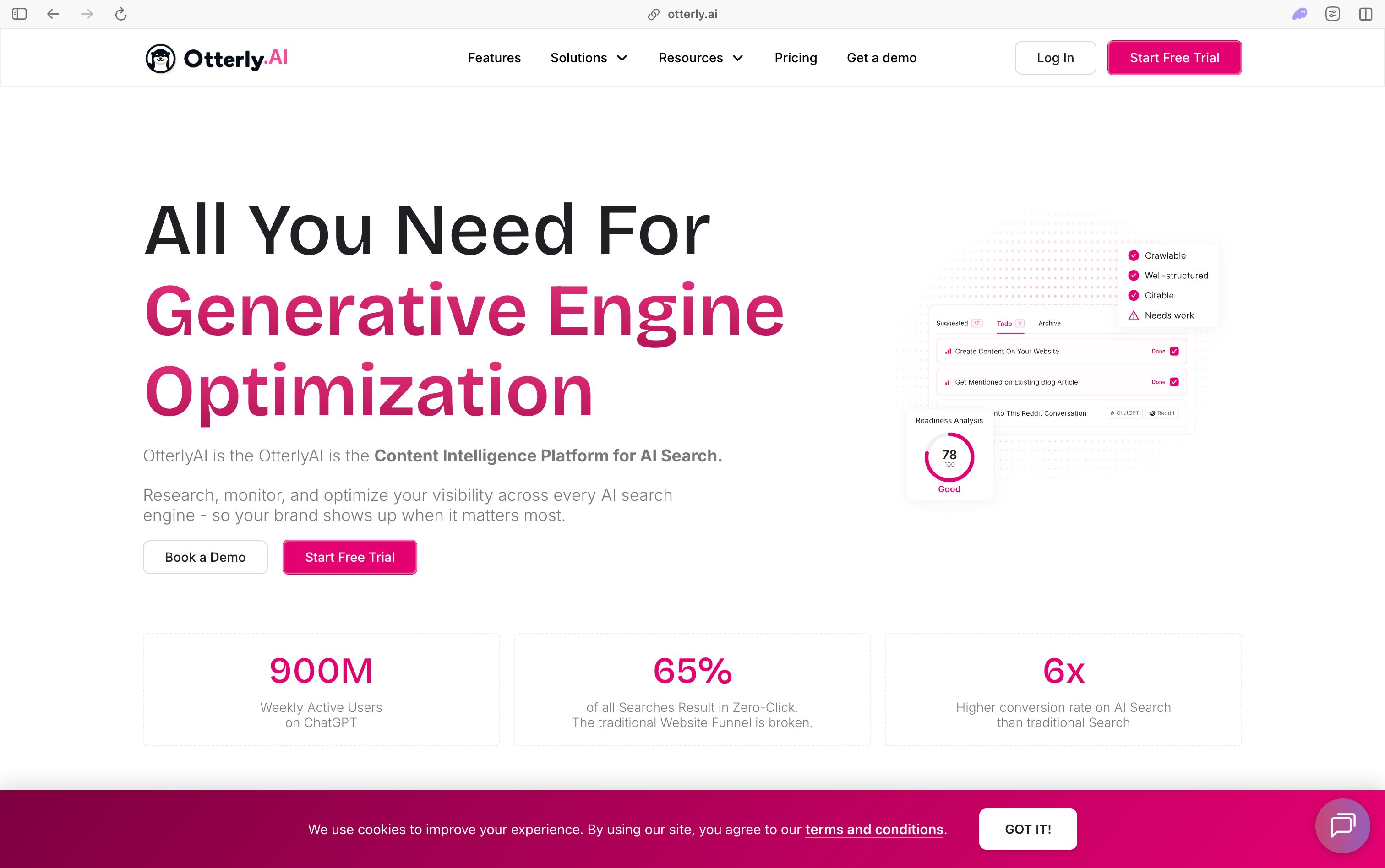

2. Otterly.AI

Otterly.AI positions itself as an end-to-end GEO platform rather than just a monitor. Its workflow typically starts with prompt research, then AI search analytics, and finally optimization modules like content audit and crawlability checks. That is useful when your team wants recommendations in the same place as monitoring data.

Otterly also emphasizes citation and domain tracking over time, including sentiment and visibility index views. For teams that need to track visibility across ChatGPT, Gemini, Perplexity, Copilot, AI Overviews, and AI Mode from one interface, it can be a practical operational hub.

The key question in trials is whether recommendations are directly actionable. A good setup should let you move from a visibility drop to a concrete action list, such as refreshing a source page, improving internal links, or fixing indexability friction. If your team lacks bandwidth to stitch together multiple tools, this all-in-one workflow can save real execution time.

3. Profound

Profound is built around an enterprise AEO operating model. The platform groups capabilities into Answer Engine Insights, Prompt Volumes, and Agent Analytics, then layers autonomous agents for content and marketing workflows. This structure is usually a better fit for larger teams with defined ownership across SEO, content, and brand.

A meaningful differentiator is its emphasis on both visibility and technical analysis of how AI systems crawl and interpret your site. If your team already has internal execution capacity and needs cross-functional alignment around AI search, Profound is a serious option.

For enterprise buyers, implementation depth matters as much as features. Confirm how well the platform supports cross-team workflows, reporting handoffs, and governance for large prompt sets across business units. Profound tends to deliver most value when there is an existing operating cadence to turn insights into shipped updates every week.

4. RankPrompt

RankPrompt combines AI visibility monitoring with adjacent execution tools such as citation research, audits, and outreach. It highlights coverage across major AI surfaces and promotes a unified dashboard model instead of separate point tools.

For smaller teams that prefer one system over multiple integrations, that can reduce operational overhead. It is also worth noting its positioning around location and language tracking, which is useful when regional visibility differences materially affect pipeline.

The practical advantage here is workflow compression: discovery, monitoring, and execution planning can happen in one place. In pilot mode, validate whether each module is strong enough for your exact use case, especially if you run multi-market programs. If quality is consistent across modules, RankPrompt can reduce context-switching for lean growth teams.

5. Ahrefs Brand Radar

Ahrefs Brand Radar is strong when your first need is discovery and competitive mapping at scale. The product is built around a very large search-backed prompt index and supports fast exploration without heavy setup, which is valuable for research teams and agencies handling many brands.

As of May 2026, Ahrefs markets Brand Radar with hundreds of millions of monthly prompts across AI platforms. In practice, this makes it particularly useful for topic discovery, competitive share-of-voice comparisons, and identifying citation opportunities before you move into content execution.

Think of Brand Radar as a high-leverage research input, not a full operating system for GEO execution. It excels at showing where your brand is absent and where competitors are winning narrative space. Most teams pair these insights with a separate content and technical workflow to close identified gaps.

6. Semrush AI Visibility Toolkit

Semrush AI Visibility Toolkit is a practical extension for teams already using Semrush for SEO operations. It includes visibility benchmarking, mention and sentiment analysis, prompt/topic discovery, daily prompt tracking, and technical checks related to AI crawler access.
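For a sense of what an AI crawler access check involves, here is a small sketch using Python's standard `urllib.robotparser`. It is not how Semrush implements its checks. The robots.txt content and URL are hypothetical, and the user-agent tokens listed are commonly published ones that you should verify against each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice, load your site's actual file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: PerplexityBot
Disallow: /

User-agent: *
Allow: /
"""

# Assumed crawler tokens; confirm current names with each vendor.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def crawler_access(robots_txt, url, agents):
    """Report whether each user agent may fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(ROBOTS_TXT, "https://example.com/pricing", AI_CRAWLERS)
```

This only covers robots.txt rules. Blocks at the CDN or firewall level would not show up here.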

The operational benefit is integration with the rest of the Semrush ecosystem. Instead of creating a separate reporting stack, teams can incorporate AI visibility into existing dashboards and workflows. According to Semrush documentation as of May 2026, access limits and add-ons vary by plan, so confirm volume needs before rollout.

This is often the lowest-friction option for organizations that already depend on Semrush for rank tracking and site health. Data normalization, stakeholder reporting, and workflow adoption are usually easier when AI visibility metrics live inside existing processes. Before expanding usage, verify prompt limits, market coverage, and how quickly new prompt clusters can be onboarded.

7. Scrunch

Scrunch focuses on AI answer share and citation intelligence, with an enterprise-oriented framing around AI customer experience. The platform emphasizes prompt monitoring, citation opportunities, and competitive visibility trends, while also connecting this to AI-agent traffic behavior.

That makes Scrunch relevant for teams treating AI visibility as a direct acquisition channel, not just an SEO experiment. If your organization is actively measuring AI-origin traffic and attribution, this approach can fit well.

In practice, Scrunch is most useful when your organization already tracks pipeline impact from AI-driven journeys. The answer-share framing can help content, brand, and demand teams align on one scorecard instead of separate channel metrics. Prioritize integrations and attribution fit during evaluation so AI visibility insights connect to revenue reporting.

Tool selection gets easier when you decide your operating model first. If you are in discovery mode, prioritize breadth of prompt and competitor coverage. If you are already executing GEO programs, prioritize workflow depth: source diagnostics, action recommendations, and reporting integrations.

In other words, choose for the bottleneck you have now, not the stack you might want later. Most teams get better results with one primary platform and one lightweight validation process than by trying to run three platforms in parallel.

For strategy context around GEO foundations and experimentation quality, see Generative Engine Optimization and why LLM evaluation quality matters. If you are building an internal measurement loop, this AI harness engineering guide is also useful.

What to Track in the First 90 Days

If you want to avoid noisy reporting, define a small KPI set and keep it stable for one quarter. A practical baseline is:

- Mention rate by prompt cluster (transactional, comparative, educational)

- Citation rate from trusted domains vs low-authority sources

- Average position in AI responses when your brand appears

- Sentiment trend by product line, not just at the brand level

- Competitor share of voice for your highest-intent prompts

This gives you enough signal to prioritize content and technical fixes without overfitting to day-to-day model fluctuations.
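Three of the KPIs above (mention rate by cluster, average position, and share of voice) can be sketched from raw observations as follows. The observation shape, brand names, and function names are hypothetical, and citation rate and sentiment are left out because they depend on data your tool may label differently.

```python
from collections import Counter
from statistics import mean

# Hypothetical observations, one per (prompt, engine) run.
# "brands" lists brands in the order they appear in the answer.
observations = [
    {"cluster": "transactional", "brands": ["Acme", "Globex"]},
    {"cluster": "transactional", "brands": ["Globex"]},
    {"cluster": "comparative", "brands": ["Globex", "Acme", "Initech"]},
    {"cluster": "educational", "brands": []},
]


def mention_rate_by_cluster(obs, brand):
    """Share of answers in each cluster that name the brand."""
    hits, totals = Counter(), Counter()
    for o in obs:
        totals[o["cluster"]] += 1
        hits[o["cluster"]] += int(brand in o["brands"])
    return {cluster: hits[cluster] / totals[cluster] for cluster in totals}


def average_position(obs, brand):
    """Mean 1-based position across answers where the brand appears, else None."""
    positions = [o["brands"].index(brand) + 1 for o in obs if brand in o["brands"]]
    return mean(positions) if positions else None


def share_of_voice(obs):
    """Each brand's share of all brand mentions across the observation set."""
    mentions = Counter(b for o in obs for b in o["brands"])
    total = sum(mentions.values())
    return {b: n / total for b, n in mentions.items()} if total else {}


acme_rates = mention_rate_by_cluster(observations, "Acme")
acme_position = average_position(observations, "Acme")
voice = share_of_voice(observations)
```

Keeping these definitions fixed for the quarter matters as much as the numbers themselves, since changing a formula mid-period breaks the trend line.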

Common Mistakes Teams Make

The most common failure pattern is treating AI visibility as a reporting exercise instead of an execution loop. Teams gather dashboards but do not map each visibility gap to a specific page update, schema improvement, or crawlability fix.

Another frequent issue is changing prompt sets too often. Keep a fixed core set for longitudinal comparison, then run experiments in a separate sandbox set. This preserves data quality and makes weekly trend analysis more trustworthy.
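One way to enforce that separation is to tag each prompt set and filter before any trend calculation. The sketch below assumes a hypothetical `PromptSet` structure and result format. Freezing the core set and versioning its name is one possible convention, not a requirement of any tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSet:
    """A named, immutable group of prompts (hypothetical structure)."""
    name: str
    prompts: frozenset[str]
    longitudinal: bool  # True: included in week-over-week trends


# Hypothetical example sets.
CORE = PromptSet("core-v1", frozenset({"best crm for startups", "acme vs globex"}), True)
SANDBOX = PromptSet("sandbox", frozenset({"crm with ai assistant"}), False)


def trend_inputs(results, prompt_sets):
    """Keep only results whose prompt belongs to a longitudinal set."""
    tracked = set().union(*(s.prompts for s in prompt_sets if s.longitudinal))
    return [r for r in results if r["prompt"] in tracked]


results = [
    {"prompt": "best crm for startups", "mentioned": True},
    {"prompt": "crm with ai assistant", "mentioned": False},
]
kept = trend_inputs(results, [CORE, SANDBOX])
```

When the core set does need to change, publishing it under a new name such as `core-v2` keeps the old and new trend lines from being mixed.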

Conclusion

AI search analytics is now a core monitoring function, not an optional experiment. The winners in this category are not the tools with the most graphs, but the ones that help your team move from visibility data to shipped improvements every week.

If you are just starting, pick one primary platform, define a fixed prompt set, and run a weekly execution cadence for eight weeks before changing tools. Consistency is still a bigger advantage than feature breadth.