How to Make Your Website Agent-Ready for AI Search in 2026

Updated on May 11, 2026 · 7 mins read

If your site is still optimized only for blue-link SEO, you are leaving visibility on the table. In 2026, a large chunk of discovery starts in AI interfaces such as ChatGPT Search and Google AI features, where users often get an answer with citations before they ever open a search results page.

The good news is that “agent-ready” is not a mysterious new stack. It is mostly solid technical SEO plus better content structure, explicit crawler policy, and faster update signals. In this guide, you will get a practical implementation path you can ship.

Summary

- Google says AI features still rely on core SEO fundamentals, so start with crawlability, indexability, and clear page structure: Google AI features guidance.

- For ChatGPT Search inclusion, allow

OAI-SearchBot; blockGPTBotonly if you want to opt out of training on your content: OpenAI crawler docs and ChatGPT Search help. - Add valid schema markup because it helps systems understand page meaning explicitly: Google structured data intro.

- Keep

sitemap.xmlcurrent, submit in Search Console, and optionally use IndexNow for faster URL updates: Google sitemap docs and IndexNow docs. - Add a quick readiness check with Agent Readiness Scanner and then fix surfaced issues.

- A review of major GEO guides from 2025-2026 shows the same pattern: technical SEO first, extractable content second, and strong entity trust signals third.

What “Agent-Ready” Means in Practice

As of May 10, 2026, the most useful framing is simple: your site is agent-ready when AI systems can crawl it, understand it, trust it, and extract the right part of it quickly. That means you do not need to rebuild your website for AI, but you do need to remove ambiguity.

Google's AI-features documentation explicitly says there are no extra technical requirements just for AI Overviews and AI Mode beyond standard search requirements. At the same time, it also emphasizes baseline practices such as allowing crawling in robots.txt, making content discoverable through internal links, and keeping important content in text form. So the operational target is still disciplined SEO execution, now with stronger emphasis on extraction quality.

Step 1: Keep Important Pages Crawlable and Indexable

Start with the basics because every other step depends on this. If key pages are blocked, malformed, or poorly linked, they will underperform in both classic and AI search.

Use a minimal and explicit robots.txt:

User-agent: *

Allow: /

Sitemap: https://example.com/sitemap.xml

Google’s crawler docs also confirm that the sitemap field in robots.txt is supported by major engines. Keep this file short, explicit, and version-controlled so accidental blocks do not slip into production.

Step 2: Set AI Crawler Policy Intentionally

OpenAI's crawler docs separate search crawling from training crawling. That distinction matters. OAI-SearchBot is used for ChatGPT search inclusion, while GPTBot is tied to potential training use cases.

A common policy for teams that want search visibility but tighter training control looks like this:

User-agent: OAI-SearchBot

Allow: /

User-agent: GPTBot

Disallow: /

OpenAI's Help Center also notes there is no guaranteed top ranking in ChatGPT Search. Allowing crawling is an inclusion prerequisite, not a ranking guarantee.

Step 3: Add Structured Data for Clear Machine Interpretation

Google's structured data documentation states that structured markup gives explicit clues about page meaning. This is exactly what you want for AI retrieval and citation workflows.

At minimum, implement the schema type that matches the page intent, such as Article, FAQPage, HowTo, Product, or Organization. Keep markup aligned with visible content, and validate before shipping.

How-To Schema Example (JSON-LD)

Use this pattern for procedural pages:

{

"@context": "https://schema.org",

"@type": "HowTo",

"name": "Make your website agent-ready",

"step": [

{

"@type": "HowToStep",

"name": "Allow crawling",

"text": "Ensure important pages are crawlable and linked internally."

},

{

"@type": "HowToStep",

"name": "Publish structured data",

"text": "Add valid JSON-LD matching the page's visible content."

},

{

"@type": "HowToStep",

"name": "Monitor and refresh",

"text": "Track visibility and update core pages regularly."

}

]

}

Step 4: Keep Sitemaps Fresh and Push URL Changes Fast

Google's sitemap docs are clear that submitting a sitemap is a hint, not a guarantee, but it remains foundational for reliable discovery. Keep your sitemap generation automated and ensure canonical URLs are included.

If your site changes frequently, add IndexNow on top. IndexNow documentation supports batch notifications of up to 10,000 URLs per POST, which can reduce lag between publishing and discovery for participating engines.

Step 5: Add llms.txt as an Optional AI-First Layer

Google does not require any new machine-readable AI file for inclusion in Google AI features. That said, llms.txt is emerging as a useful optional layer for developer docs and knowledge-heavy sites.

The llms.txt spec defines a root-level Markdown file with a consistent structure (/llms.txt), designed to make high-value links easier for language models and agents to interpret.

A minimal example:

# Example Docs

> Official documentation and implementation guides.

## Core Guides

- [Quick Start](https://example.com/docs/quick-start): Setup in under 5 minutes

- [API Reference](https://example.com/docs/api): Endpoints, auth, and examples

## Tutorials

- [Webhook Guide](https://example.com/docs/webhooks): End-to-end webhook setup

Treat this as a discoverability aid, not a replacement for clean HTML, structured data, or sitemaps.

Step 6: Write for Citation, Not Just Clicks

AI systems prefer answer-ready passages they can quote cleanly. That changes how you should structure important pages.

Use descriptive headings that mirror real user questions, open sections with direct answers, and then expand with detail. Keep claims source-backed and date-sensitive content visibly updated. This makes your content more likely to be selected as supporting evidence in generated answers.

If you want the broader strategy layer, pair this implementation guide with our GEO guide.

Step 7: Measure AI Search Performance Like an Engineering Loop

Google reports AI-feature traffic inside the standard Search Console Performance report under the Web search type, so you can start measuring without special tooling. For ChatGPT Search presence, run prompt-level checks and monitor referral patterns.

For richer tracking across models, use workflows like those in AI search analytics tools.

Step 8: Build Entity and Off-Site Trust Signals

One section many GEO articles mention, but many implementation guides skip, is entity clarity beyond your own website. AI systems do not only evaluate on-page quality; they also cross-check who you are across profiles, references, reviews, and third-party mentions. If your category, product positioning, or brand description changes across platforms, your trust signals become weaker.

Treat this as a consistency layer on top of technical SEO. Keep one canonical brand description, align it across your site and major public profiles, and make organization and author identity explicit in structured data where relevant. Then maintain credible mentions in places your audience actually reads and discusses your category. This is often the missing step when teams have good crawlability and schema but still see weak AI citation rates.

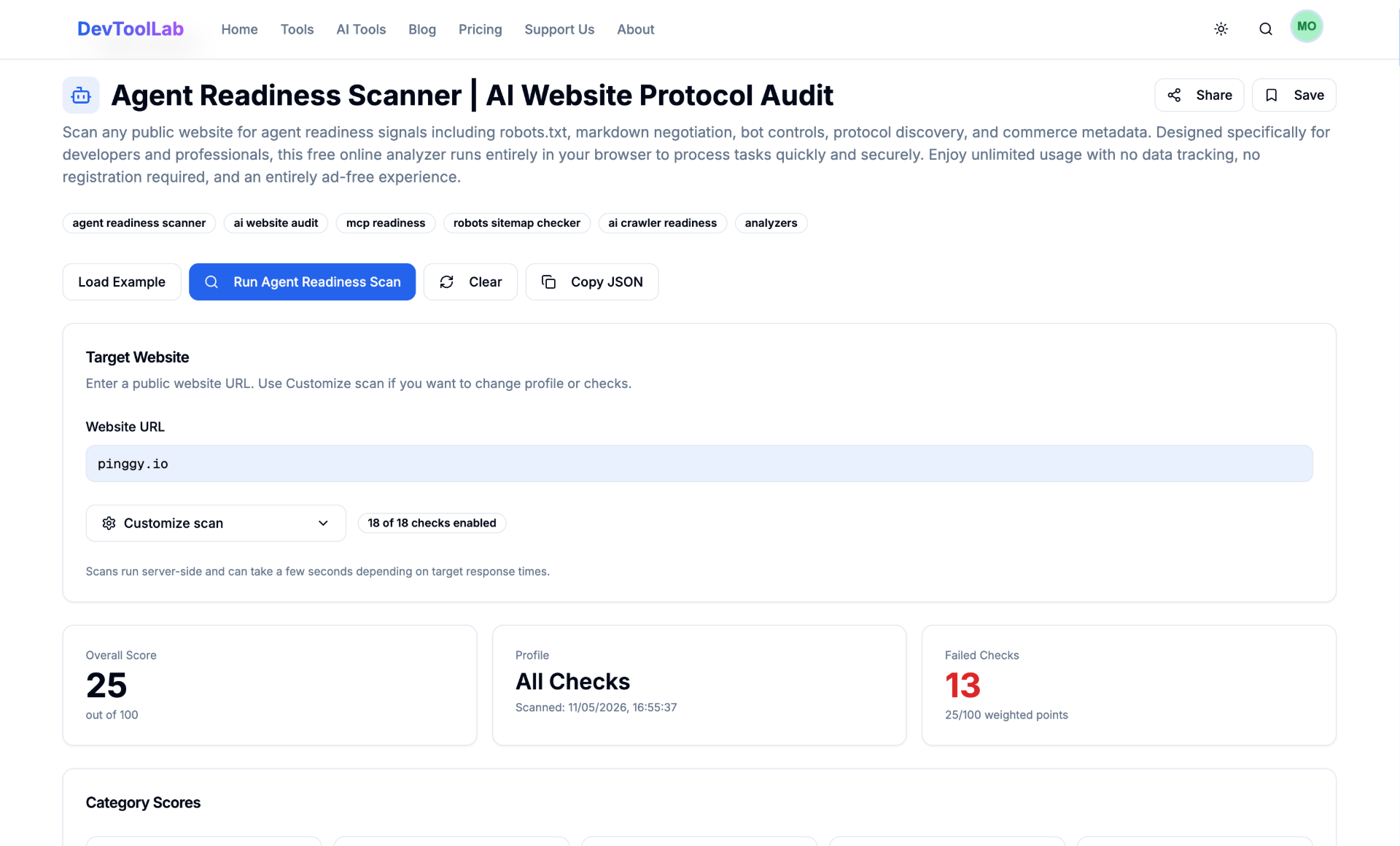

Run a Fast Agent-Readiness Audit

If you want a quick first pass before deeper technical work, run Agent Readiness Scanner. It helps surface obvious issues quickly so you can prioritize fixes and re-check progress.

Industry Consensus and Common Gaps

After reviewing major GEO articles published between August 2025 and April 2026, the pattern is consistent: strong technical SEO remains the baseline, but visibility in AI answers depends heavily on extractable content, trusted entity signals, and prompt-level measurement. Most mature guides now evaluate success using mentions, citations, share of voice, and sentiment alongside traditional rankings and traffic.

The most common gap in many “quick GEO” blog posts is the lack of a concrete off-site entity plan. They explain crawlability and schema, but do not show how to keep identity signals consistent across the wider web. In practice, that missing layer is often what separates pages that are indexed from brands that are actually cited in AI-generated answers.

Common Mistakes That Hurt AI Visibility

The most common mistake is trying to optimize for AI without fixing crawl/index basics first. The second is shipping structure that looks clean to humans but is ambiguous to machines, such as weak headings and missing schema. The third is treating this as a one-time setup instead of a recurring maintenance loop.

A simple monthly cycle works well: run readiness checks, refresh stale pages, revalidate schema, and re-test priority prompts.

Conclusion

To make your website truly agent-ready, treat it like a continuous engineering workflow: keep high-value pages crawlable and easy to discover internally, set explicit crawler policy for OAI-SearchBot and GPTBot in robots.txt, validate JSON-LD on key templates (Article, FAQPage, HowTo, Product), keep your sitemap current and submitted, add optional llms.txt where it helps documentation-heavy sections, and run

Agent Readiness Scanner on a regular schedule.

Agent readiness is not a replacement for SEO. It is the next operational layer on top of it. Teams that execute both layers consistently will capture far more of the discovery surface that now happens inside AI search.